Introduction

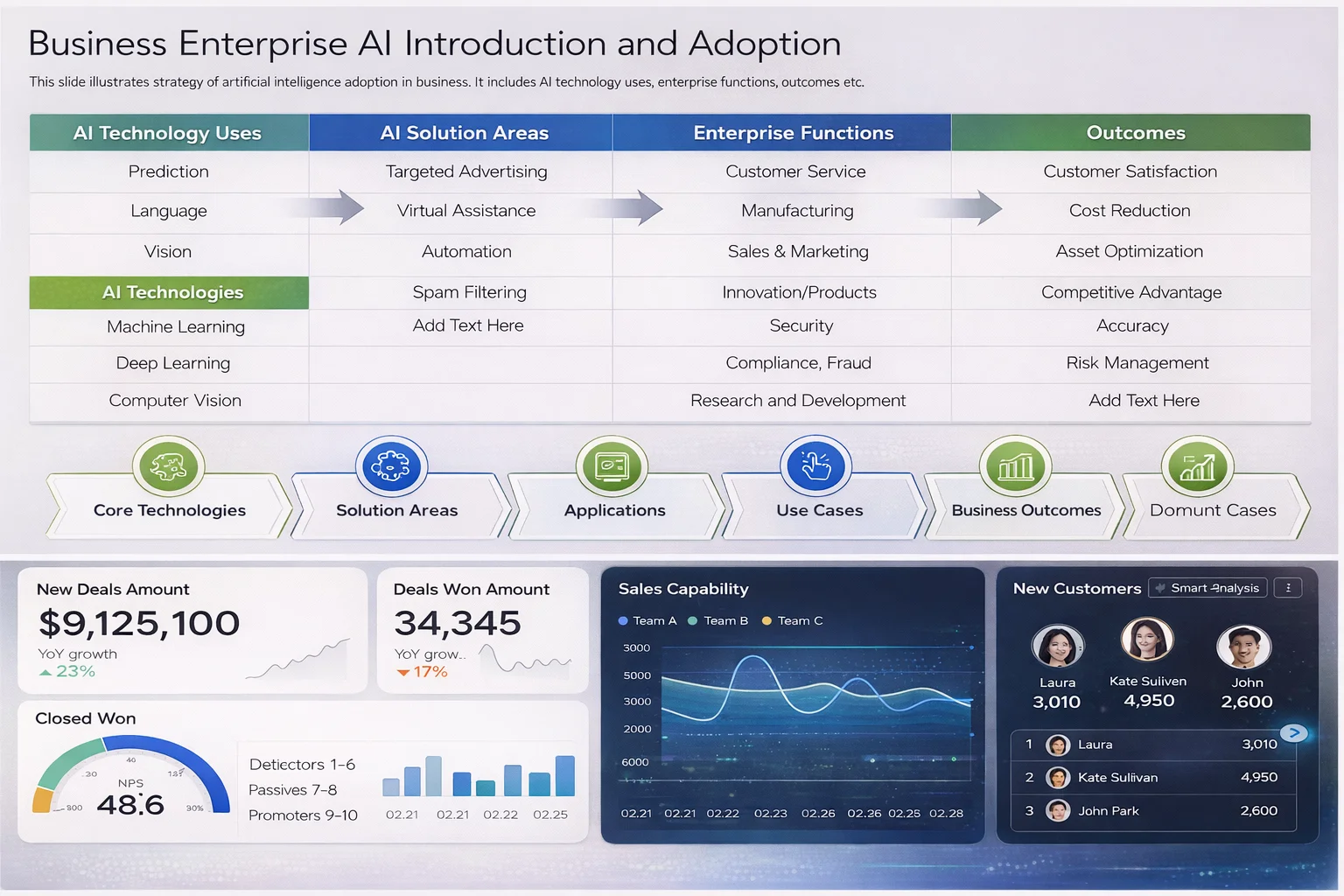

Artificial Intelligence is no longer experimental. In 2026, it functions as operational infrastructure embedded across enterprise systems, from predictive maintenance and fraud detection to generative AI copilots and autonomous workflows. While AI budgets continue to increase, many initiatives still stall at the pilot stage. The issue is rarely model performance. It is vendor selection. Choosing the right AI partner today requires far more than reviewing product demos or comparing pricing tiers.

Enterprises must rigorously evaluate architectural maturity, governance and security standards, scalability under production load, regulatory compliance, data strategy alignment, and long-term partnership viability. This guide presents a structured AI vendor evaluation framework built specifically for CIOs, CTOs, procurement leaders, and digital transformation executives navigating enterprise AI adoption in 2026.

Define the Business Objective Before Reviewing Vendors

| Category | Details | Why It Matters in 2026 |
|---|---|---|
| Primary Business Outcome | Revenue uplift · Cost reduction · Operational efficiency · Compliance automation | Vendor selection must align with measurable ROI, not technical novelty |
| Strategic vs Experimental | Core transformation initiative or exploratory pilot | Strategic initiatives require production-grade architecture and long-term vendor viability |
| Mission-Critical Integration | Will AI integrate into ERP, CRM, supply chain, finance, or compliance systems | Determines required reliability, uptime SLAs, security posture, and scalability |
| Defined KPIs | Quantifiable targets such as percentage cost savings, processing time reduction, fraud detection accuracy, conversion uplift | Prevents evaluation based on feature comparison instead of outcome alignment |
| Workflow Automation Goals | AI agents handling repetitive tasks, document processing, decision routing | Requires orchestration capability and integration depth |
| Predictive Analytics Objectives | Forecasting, anomaly detection, structured enterprise data modeling | Demands strong data engineering and model governance maturity |
| Multilingual AI Copilots | Internal knowledge assistants, employee copilots, customer-facing assistants | Requires NLP maturity, contextual grounding, and enterprise-grade RAG architecture |
| Computer Vision Use Cases | Manufacturing inspection, logistics tracking, warehouse monitoring | Necessitates real-time inference capability and edge deployment options |
| AI-Powered Customer Engagement | Conversational AI, personalization engines, intelligent recommendation systems | Requires scalability, latency optimization, and CRM integration capability |
| Evaluation Priority | Business fit before technical sophistication | Ensures alignment with enterprise transformation strategy rather than vendor marketing strength |

Assess Technical Architecture and Scalability

A credible AI vendor in 2026 must demonstrate architectural transparency.

• Not dependent on a single LLM provider

• Supports multi-model routing based on cost, latency, or risk

• Flexible across OpenAI, open source, and sovereign models

• Clear model abstraction layer with failover logic

• Cloud-native with optional hybrid deployment

• GPU optimization strategy clearly defined

• Auto-scaling for inference and pipelines

• Multi-zone failover with defined RTO and RPO

• Load-tested and monitored in production

• CI/CD for models and pipelines

• Version control for prompts and models

• Rollback strategy with monitoring and alerts

• Automated retraining with drift detection

• Full audit logging and governance

If a vendor cannot clearly explain their deployment architecture and reliability strategy, production readiness is doubtful.
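To make the routing and failover items in the checklist above concrete, here is a minimal Python sketch of a model abstraction layer. It assumes a hypothetical `ModelBackend` record and `route` function; the backend names, prices, and latencies are invented for illustration and do not describe any real provider.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class ModelBackend:
    """One model behind the abstraction layer (all values are illustrative)."""
    name: str
    cost_per_1k_tokens: float   # hypothetical price in USD
    p95_latency_ms: int         # hypothetical measured latency
    call: Callable[[str], str]  # sends the prompt to the model


def route(prompt: str, backends: Sequence[ModelBackend],
          max_latency_ms: int) -> tuple[str, str]:
    """Try the cheapest backend within the latency budget, failing over on error."""
    eligible = sorted(
        (b for b in backends if b.p95_latency_ms <= max_latency_ms),
        key=lambda b: b.cost_per_1k_tokens,
    )
    errors = []
    for backend in eligible:
        try:
            return backend.name, backend.call(prompt)
        except Exception as exc:  # a real system would catch narrower error types
            errors.append(f"{backend.name}: {exc}")
    raise RuntimeError("all eligible backends failed: " + "; ".join(errors))


def _unavailable(prompt: str) -> str:
    # Simulates a provider outage so the failover path is exercised.
    raise ConnectionError("provider unavailable")


backends = [
    ModelBackend("hosted-small", 0.2, 300, _unavailable),
    ModelBackend("open-source-local", 0.5, 450, lambda p: f"echo: {p}"),
    ModelBackend("hosted-large", 3.0, 1200, lambda p: f"large: {p}"),
]
print(route("classify this invoice", backends, max_latency_ms=500))
# ('open-source-local', 'echo: classify this invoice')
```

A vendor with a comparable layer should be able to show where this selection logic lives, which signals drive it, and how failures are logged.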

Evaluate Data Governance and Compliance

In 2026, compliance risk outweighs experimentation risk, making data governance a primary evaluation criterion for any AI vendor. Enterprises must verify strict data residency controls, strong encryption at rest and in transit, granular role-based access management, comprehensive model audit logs, and full prompt and response traceability. Vendors should also demonstrate formal bias detection and mitigation processes embedded within their model lifecycle.
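As an illustration of what prompt and response traceability can look like, the sketch below keeps a tamper-evident audit log by chaining record hashes. The field names and the functions `append_audit_record` and `verify_chain` are hypothetical. A production system would also need durable storage, access control, and redaction of sensitive content.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash for the first record in the chain


def append_audit_record(log: list[dict], user: str, model_version: str,
                        prompt: str, response: str) -> dict:
    """Append one prompt/response record that is linked to the previous one."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model_version": model_version,
        "prompt": prompt,
        "response": response,
        "prev_hash": log[-1]["record_hash"] if log else GENESIS_HASH,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["record_hash"] = hashlib.sha256(payload).hexdigest()
    log.append(record)
    return record


def verify_chain(log: list[dict]) -> bool:
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if record["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != record["record_hash"]:
            return False
        prev_hash = record["record_hash"]
    return True


audit_log: list[dict] = []
append_audit_record(audit_log, "analyst-1", "model-v3", "Summarize claim 42", "Summary text")
append_audit_record(audit_log, "analyst-2", "model-v3", "Flag anomalies", "None found")
print(verify_chain(audit_log))  # True
```

Asking a vendor to demonstrate an equivalent integrity check on their own logs is a quick way to test whether traceability is real or only claimed.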

For organizations operating in regulated markets, alignment with globally recognized standards is essential. These include ISO frameworks (for example, ISO/IEC 27001 for information security and ISO/IEC 42001 for AI management systems), the NIST AI Risk Management Framework, and the European Union AI Act. Mature vendors will provide documented compliance mappings that clearly show how their architecture and processes align with these standards. Verbal assurances are insufficient without structured evidence, audit artifacts, and policy documentation.

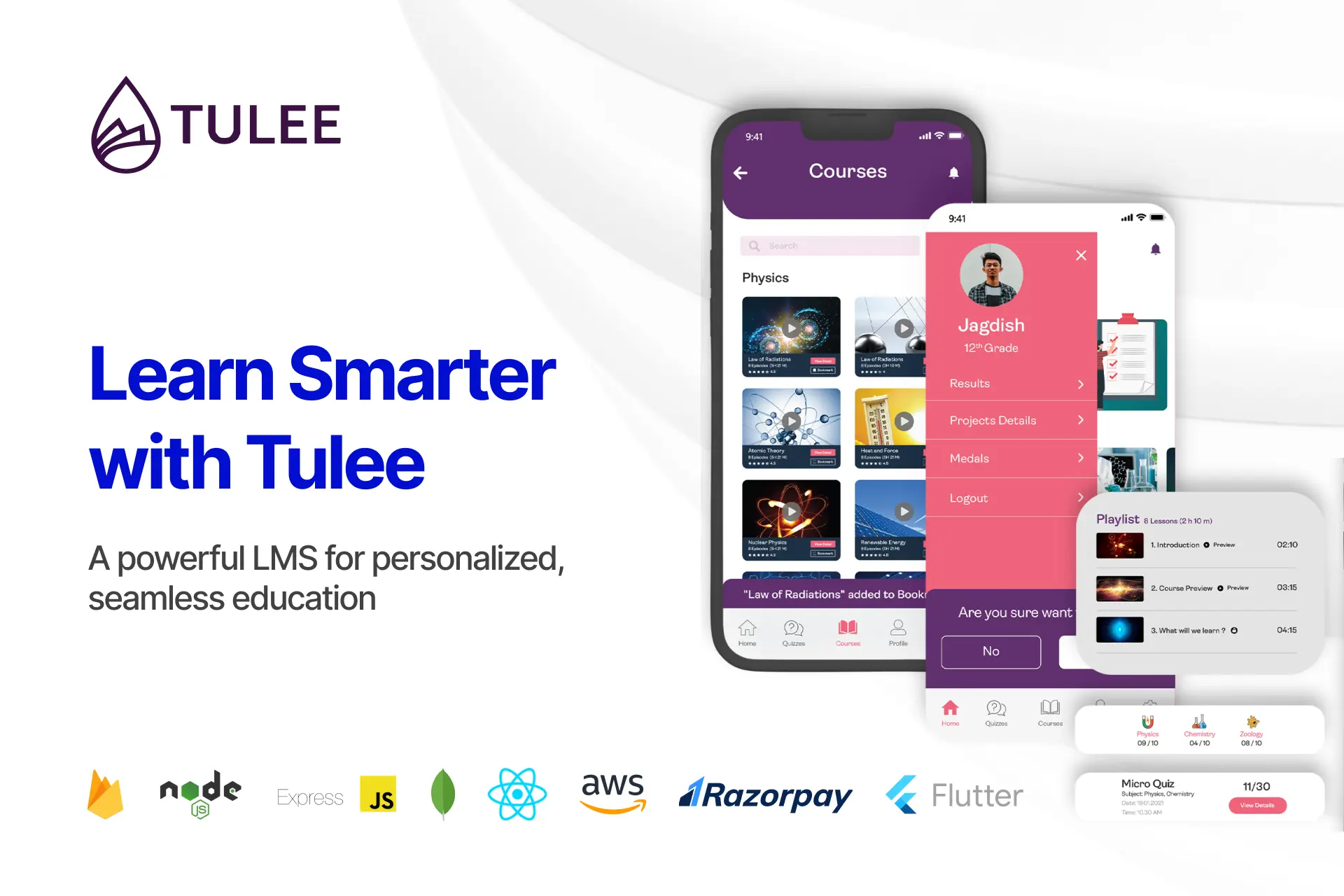

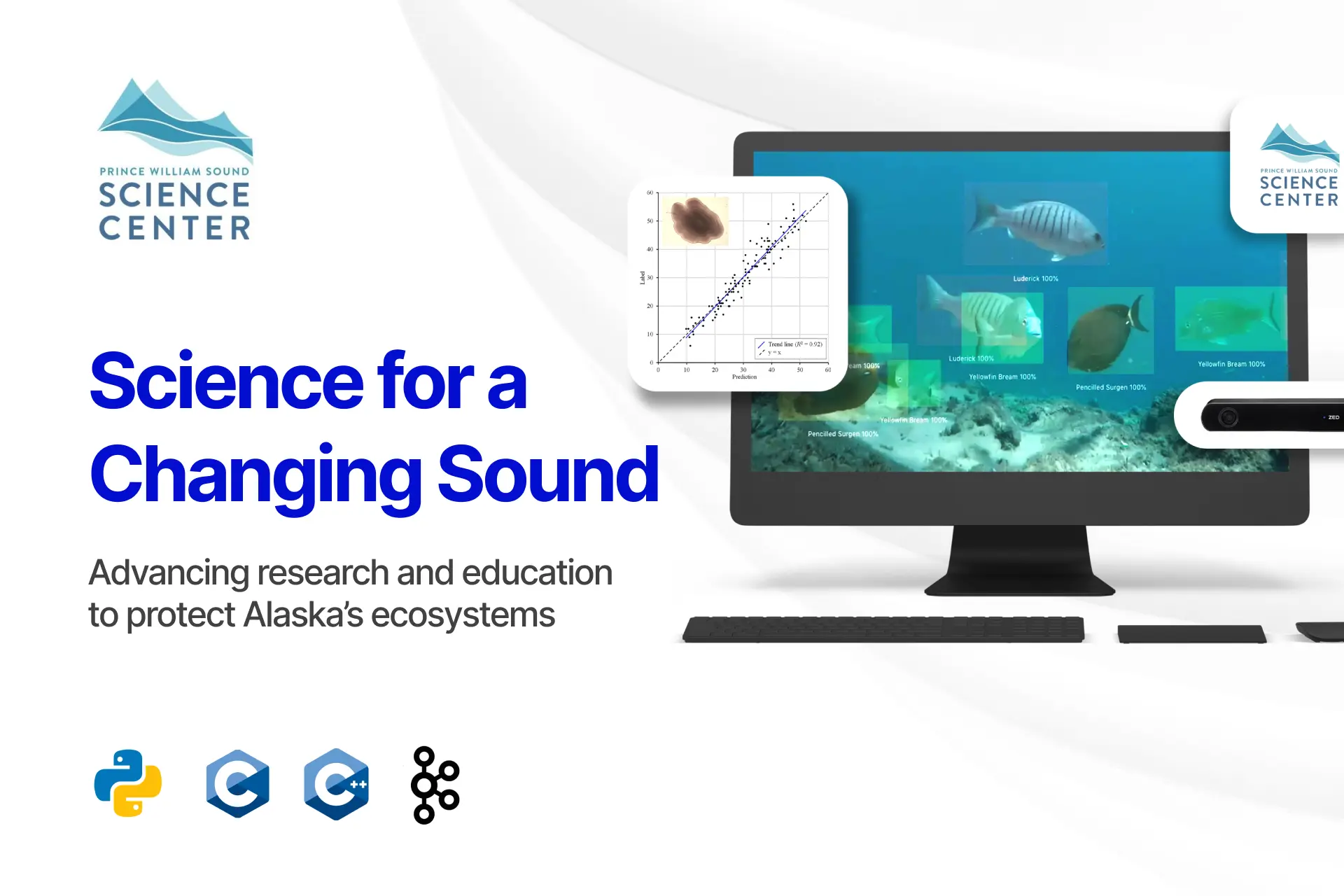

Review Real World Enterprise Deployments

In 2026, a proof of concept is no longer a differentiator. Enterprises should ask vendors what production systems are currently live, which industries they have successfully deployed in, whether they can provide measurable ROI outcomes, what the average deployment timeline has been, and what failure cases they encountered and resolved.

Serious AI vendor due diligence requires tangible evidence, including detailed case studies, verifiable client references, clear architecture diagrams, and performance benchmarks. Without documented production success and measurable impact, vendor claims should not be considered enterprise-ready. For a framework on how to structure and assess these enterprise-grade capabilities, see the guidance at https://www.nyxwolves.com/.

Examine Integration Capability

Most AI failures do not occur at the model layer. They occur during integration.

In enterprise environments, AI must operate inside existing ecosystems that include ERP systems, CRM platforms, legacy warehouse management systems, custom internal dashboards, and centralized data lakes. The correct evaluation approach is to assess integration as a structured workflow rather than a feature checklist.

Identify all upstream and downstream systems the AI solution must interact with, including transactional systems, reporting layers, and identity providers.

Verify REST API maturity, including versioning standards, authentication methods, rate limiting, and structured error handling. Confirm webhook support for real-time event notifications and bidirectional communication.
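Rate limiting and structured errors are easiest to evaluate from the client side. The following sketch assumes a hypothetical `send` callable that returns an HTTP status code and a parsed JSON body with an `error` field. It retries on 429 and 5xx responses with exponential backoff and fails fast on other client errors.

```python
import time
from typing import Callable


def call_with_backoff(send: Callable[[], tuple[int, dict]],
                      max_attempts: int = 4,
                      base_delay_s: float = 0.5,
                      sleep: Callable[[float], None] = time.sleep) -> dict:
    """Call `send`, retrying rate-limit and server errors with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        status, body = send()
        if status < 400:
            return body
        retryable = status == 429 or status >= 500
        if not retryable or attempt == max_attempts - 1:
            message = body.get("error", "unknown error")
            raise RuntimeError(f"request failed with status {status}: {message}")
        sleep(base_delay_s * (2 ** attempt))  # waits 0.5s, 1s, 2s, ...
    raise AssertionError("unreachable")


# Simulated vendor API: rate limited once, unavailable once, then successful.
_responses = iter([
    (429, {"error": "rate limit exceeded"}),
    (503, {"error": "service unavailable"}),
    (200, {"result": "ok"}),
])
_delays: list[float] = []
print(call_with_backoff(lambda: next(_responses), sleep=_delays.append))  # {'result': 'ok'}
print(_delays)  # [0.5, 1.0]
```

If the vendor's API returns unstructured error pages or omits rate-limit signals, a client like this cannot be written cleanly, which is itself a useful finding.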

Assess whether the vendor supports event-driven architecture using queues or message brokers to ensure scalability and resilience. AI should respond to business events rather than rely solely on polling or manual triggers.
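The pattern can be sketched with Python's standard library, using an in-process `queue.Queue` as a stand-in for a real message broker. The event type `invoice.created` and the handler are hypothetical. The point is that the AI step runs when an event arrives rather than on a polling schedule.

```python
import queue
import threading
from typing import Callable


def run_worker(events: queue.Queue, handlers: dict[str, Callable[[dict], str]],
               results: list[str]) -> None:
    """Consume events until a None sentinel arrives, dispatching by event type."""
    while True:
        event = events.get()
        try:
            if event is None:  # sentinel: shut the worker down
                return
            handler = handlers.get(event["type"])
            if handler is not None:  # unknown event types are ignored here
                results.append(handler(event["payload"]))
        finally:
            events.task_done()


events: queue.Queue = queue.Queue()
results: list[str] = []
handlers = {
    # Stand-in for an AI scoring call triggered by a business event.
    "invoice.created": lambda payload: f"scored invoice {payload['id']}",
}

worker = threading.Thread(target=run_worker, args=(events, handlers, results))
worker.start()
events.put({"type": "invoice.created", "payload": {"id": "INV-1"}})
events.put({"type": "unknown.event", "payload": {}})
events.put(None)
worker.join()
print(results)  # ['scored invoice INV-1']
```

In a real deployment the queue would be a durable broker, and the questions to ask the vendor are about delivery guarantees, retries, and dead-letter handling.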

Confirm compatibility with enterprise SSO based on standards such as SAML or OAuth. Validate role-based access enforcement and directory integration with corporate identity providers.

Ensure the AI solution integrates directly within existing user interfaces, dashboards, or operational systems. In 2026, AI must augment existing workflows, not operate as a standalone portal that forces behavioral change.

If integration requires heavy customization, manual workarounds, or parallel systems, long-term adoption risk is high.


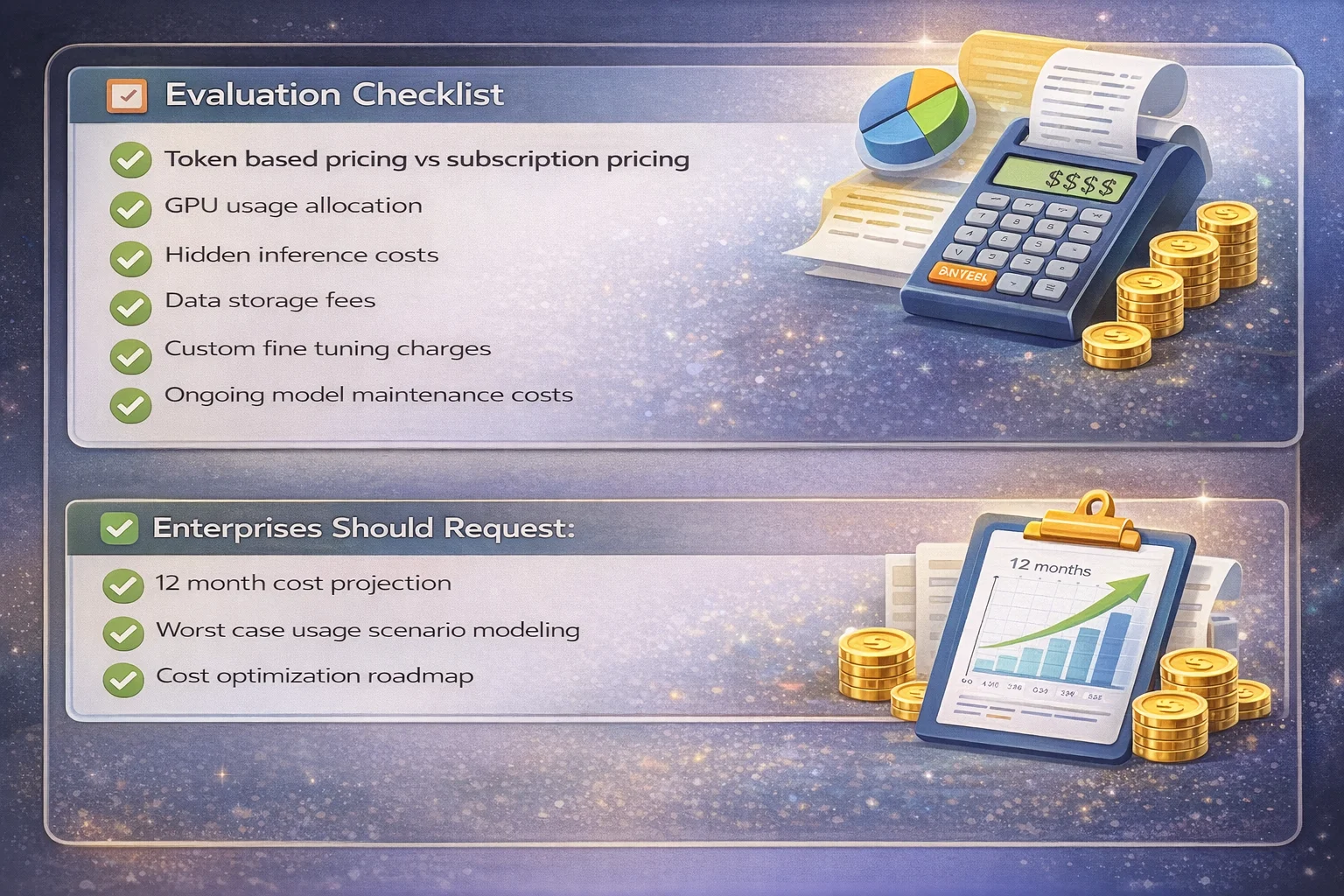

Analyze AI Vendor Transparency in Cost Structure

Cost transparency is especially critical for GenAI-heavy deployments.
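One way to compare vendor quotes is to reduce them to a simple usage-based model. The sketch below assumes token-based pricing with separate input and output rates. The function name, the traffic figures, and the prices are all hypothetical placeholders, not real vendor rates.

```python
def monthly_inference_cost(requests_per_day: int,
                           input_tokens: int,
                           output_tokens: int,
                           price_in_per_1k: float,
                           price_out_per_1k: float,
                           days: int = 30) -> float:
    """Estimate monthly spend from per-request token counts and per-1k-token prices."""
    per_request = (input_tokens / 1000) * price_in_per_1k \
        + (output_tokens / 1000) * price_out_per_1k
    return requests_per_day * days * per_request


# Hypothetical workload: 10,000 requests a day, 1,500 input and 500 output tokens each.
estimate = monthly_inference_cost(
    requests_per_day=10_000,
    input_tokens=1_500,
    output_tokens=500,
    price_in_per_1k=0.001,   # assumed price in USD, for illustration only
    price_out_per_1k=0.002,  # assumed price in USD, for illustration only
)
print(f"Estimated monthly inference cost: ${estimate:,.2f}")
```

A transparent vendor should be able to supply every input to a model like this, plus the fixed costs it leaves out, such as hosting, support tiers, and retraining.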

Evaluate AI Talent Depth and Team Structure

ML engineers design, fine-tune, evaluate, and optimize models to ensure accuracy, performance, and business relevance.

MLOps specialists manage model lifecycle, CI/CD pipelines, version control, monitoring, and automated retraining in production.

DevOps engineers architect scalable, resilient, cloud-native infrastructure with proper GPU orchestration and auto-scaling.

Security engineers enforce encryption, access control, compliance mapping, and audit logging across the AI stack.

Domain consultants translate business requirements into deployable AI workflows aligned with industry regulations and operational realities.

Product managers align AI initiatives with measurable outcomes, roadmap priorities, and stakeholder expectations.

Avoid vendors who rely heavily on freelance prompt engineers without infrastructure depth

Vendors dependent primarily on freelance prompt engineers typically lack the architectural, operational, and governance maturity required for enterprise scale.

In 2026, AI deployment is multidisciplinary

In 2026, successful AI deployment requires coordinated expertise across models, infrastructure, security, compliance, and business integration rather than isolated technical experimentation.

Assess Long Term Strategic Alignment

| Evaluation Area | What to Examine | Why It Matters |
|---|---|---|
| Product Roadmap Transparency | Clear multi year roadmap with version plans and upgrade paths | Ensures platform stability and avoids reactive development |
| Research Investment | Dedicated AI research team and published advancements | Indicates long term innovation capacity rather than short term resale strategy |
| Partnerships with Hyperscalers | Formal partnerships with major cloud providers | Strengthens scalability, compliance alignment, and infrastructure reliability |
| Open Source Contribution | Active contributions or maintenance of open source projects | Signals technical depth and ecosystem credibility |
| Geographic Presence | Regional offices or deployment capabilities | Supports data residency compliance and local enterprise support |
| Support SLAs | Defined uptime guarantees, response times, and escalation processes | Reduces operational and downtime risk |

Indicators of a Long Term AI Platform Builder

| Strategic Investment Area | Expected Evidence |
|---|---|
| Continuous Model Benchmarking | Regular performance evaluation across models and workloads |
| Governance Tooling | Built in compliance dashboards, audit logs, and risk controls |
| Scalable Architecture | Multi region deployment capability with defined RTO and RPO |
| Enterprise Support Systems | Dedicated account management, technical support tiers, and change management processes |

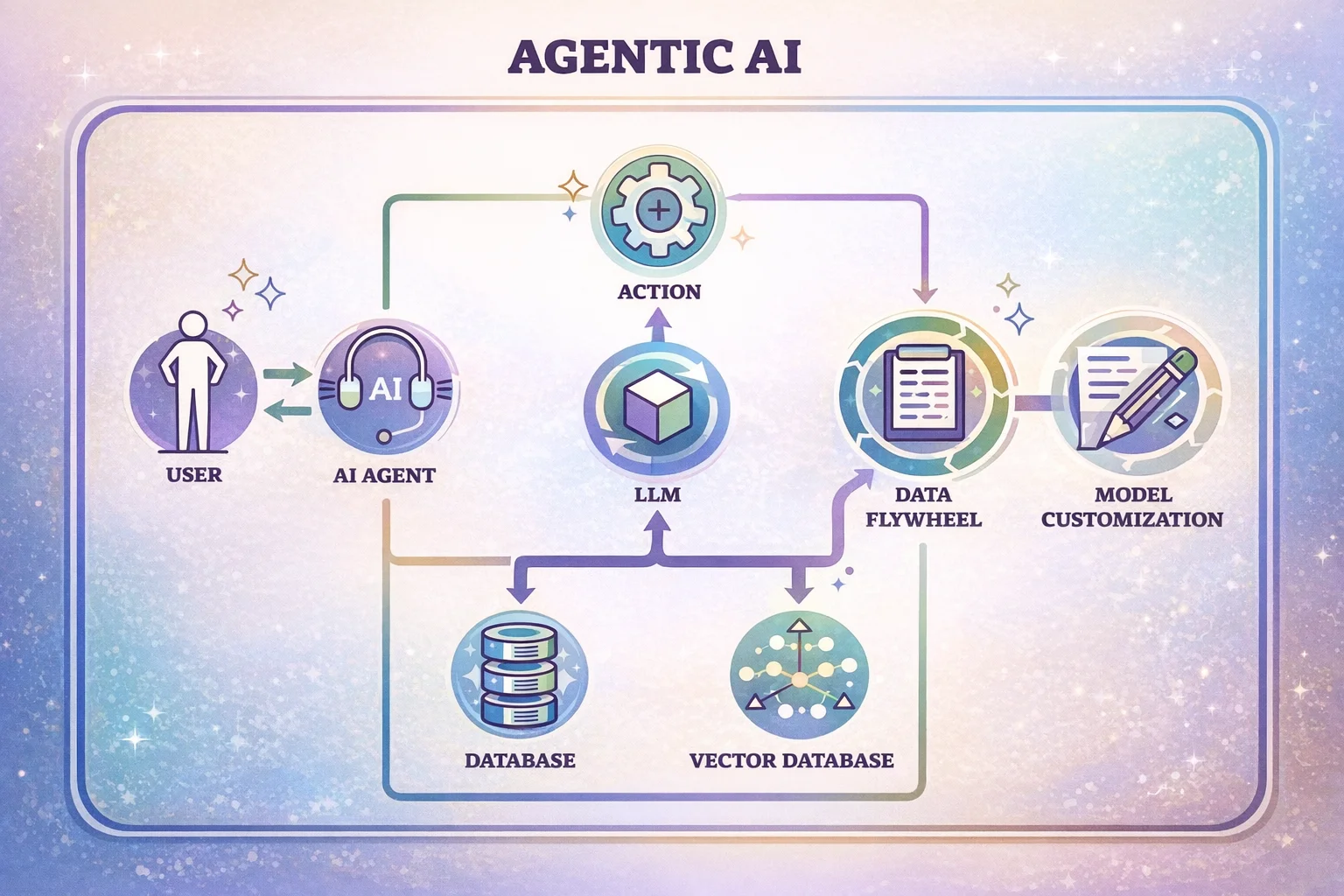

Review AI Agent and Automation Readiness

When evaluating modern AI vendors, enterprises must assess agentic capability beyond basic prompt response systems. Vendors should demonstrate support for multi-step reasoning, enabling structured decision chains rather than single-turn outputs; robust tool-calling frameworks that allow models to interact with APIs, databases, and enterprise systems; orchestrated workflows that coordinate multiple tasks and services; event-based triggers that respond dynamically to business events; and human-in-the-loop validation to ensure governance, accuracy, and compliance.

Modern AI vendor evaluation must explicitly include agentic capability assessment, as enterprise deployments increasingly depend on autonomous yet controlled execution.
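A small sketch can show what "autonomous yet controlled" means in practice. It assumes a hypothetical tool registry and an `execute_plan` function. The plan is hard-coded here, whereas in a real agent it would be produced by a model. High-impact tools are gated behind a human approval callback.

```python
from typing import Callable

# Hypothetical tool registry; real tools would call enterprise APIs or databases.
TOOLS = {
    "lookup_order": {
        "fn": lambda order_id: {"order_id": order_id, "status": "delivered"},
        "needs_approval": False,
    },
    "issue_refund": {
        "fn": lambda order_id, amount: {"order_id": order_id, "refunded": amount},
        "needs_approval": True,  # high-impact action, gated on a human decision
    },
}


def execute_plan(plan: list[dict], approve: Callable[[dict], bool]) -> list[dict]:
    """Run tool calls in order, pausing for approval where the registry requires it."""
    trace = []
    for step in plan:
        tool = TOOLS[step["tool"]]
        if tool["needs_approval"] and not approve(step):
            trace.append({"tool": step["tool"], "status": "rejected"})
            break  # stop the chain when a reviewer rejects a step
        result = tool["fn"](**step["args"])
        trace.append({"tool": step["tool"], "status": "done", "result": result})
    return trace


plan = [
    {"tool": "lookup_order", "args": {"order_id": "A-100"}},
    {"tool": "issue_refund", "args": {"order_id": "A-100", "amount": 40.0}},
]
# Stand-in approval policy: a reviewer approves refunds up to 50.
trace = execute_plan(plan, approve=lambda step: step["args"]["amount"] <= 50)
for entry in trace:
    print(entry["tool"], entry["status"])
```

The returned trace doubles as an execution record, which is the kind of evidence an evaluation committee can ask a vendor to produce for its own agent runs.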

Red Flags Enterprises Should Avoid

Black box architecture with no documentation

Vendors that cannot provide clear architecture diagrams, deployment workflows, and technical documentation introduce operational and governance risk.

Overreliance on one foundation model

Dependence on a single foundation model creates vendor lock-in, cost volatility, and limited flexibility in performance optimization.

No compliance certifications

Lack of recognized compliance certifications signals weak governance controls and increased regulatory exposure.

No MLOps structure

Absence of formal MLOps processes suggests poor model versioning, monitoring, and lifecycle management in production.
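Drift monitoring is one concrete capability to ask about. The sketch below computes the population stability index (PSI) between a reference sample and a live sample of a numeric feature. The function name and binning choices are assumptions. The thresholds mentioned in the comments are a commonly cited rule of thumb, not a standard.

```python
import math
from typing import Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               bins: int = 10) -> float:
    """PSI between a reference sample and a live sample, using equal-width bins."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for a constant feature

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


reference = [float(v) for v in range(100)]
shifted = [v + 50.0 for v in reference]

# Rule of thumb (assumption): below 0.1 is stable, above 0.25 suggests significant drift.
print(population_stability_index(reference, reference))  # 0.0
print(population_stability_index(reference, shifted) > 0.25)  # True
```

A vendor with real MLOps maturity should be able to name the drift metrics it tracks, the thresholds that trigger alerts, and what happens after an alert fires.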

No disaster recovery plan

Without a documented disaster recovery strategy with defined RTO and RPO, business continuity cannot be guaranteed.
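RPO is the maximum acceptable data loss measured in time, and RTO is the maximum acceptable downtime. A minimal check, assuming a hypothetical `check_recovery_objectives` helper and illustrative figures, compares a vendor's backup interval and measured restore time against the targets.

```python
from datetime import timedelta


def check_recovery_objectives(backup_interval: timedelta,
                              measured_restore_time: timedelta,
                              rpo: timedelta,
                              rto: timedelta) -> dict[str, bool]:
    """Compare observed recovery behavior against the stated objectives.

    Worst-case data loss is taken to be one full backup interval, which
    assumes a failure just before the next backup would have run.
    """
    return {
        "rpo_met": backup_interval <= rpo,
        "rto_met": measured_restore_time <= rto,
    }


report = check_recovery_objectives(
    backup_interval=timedelta(minutes=15),
    measured_restore_time=timedelta(hours=3),  # e.g. from a recovery drill
    rpo=timedelta(minutes=30),
    rto=timedelta(hours=2),
)
print(report)  # {'rpo_met': True, 'rto_met': False}
```

The useful input here is the measured restore time, so it is worth asking when the vendor last ran a recovery drill and what the result was.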

Overpromising ROI without benchmarks

Claims of high ROI without measurable benchmarks or case data indicate marketing-driven projections rather than validated performance.

No security whitepaper

Failure to provide a detailed security whitepaper reflects insufficient transparency around encryption, access controls, and risk mitigation.

No audit trail support

Systems that lack audit logging and traceability undermine compliance, accountability, and forensic investigation capability.

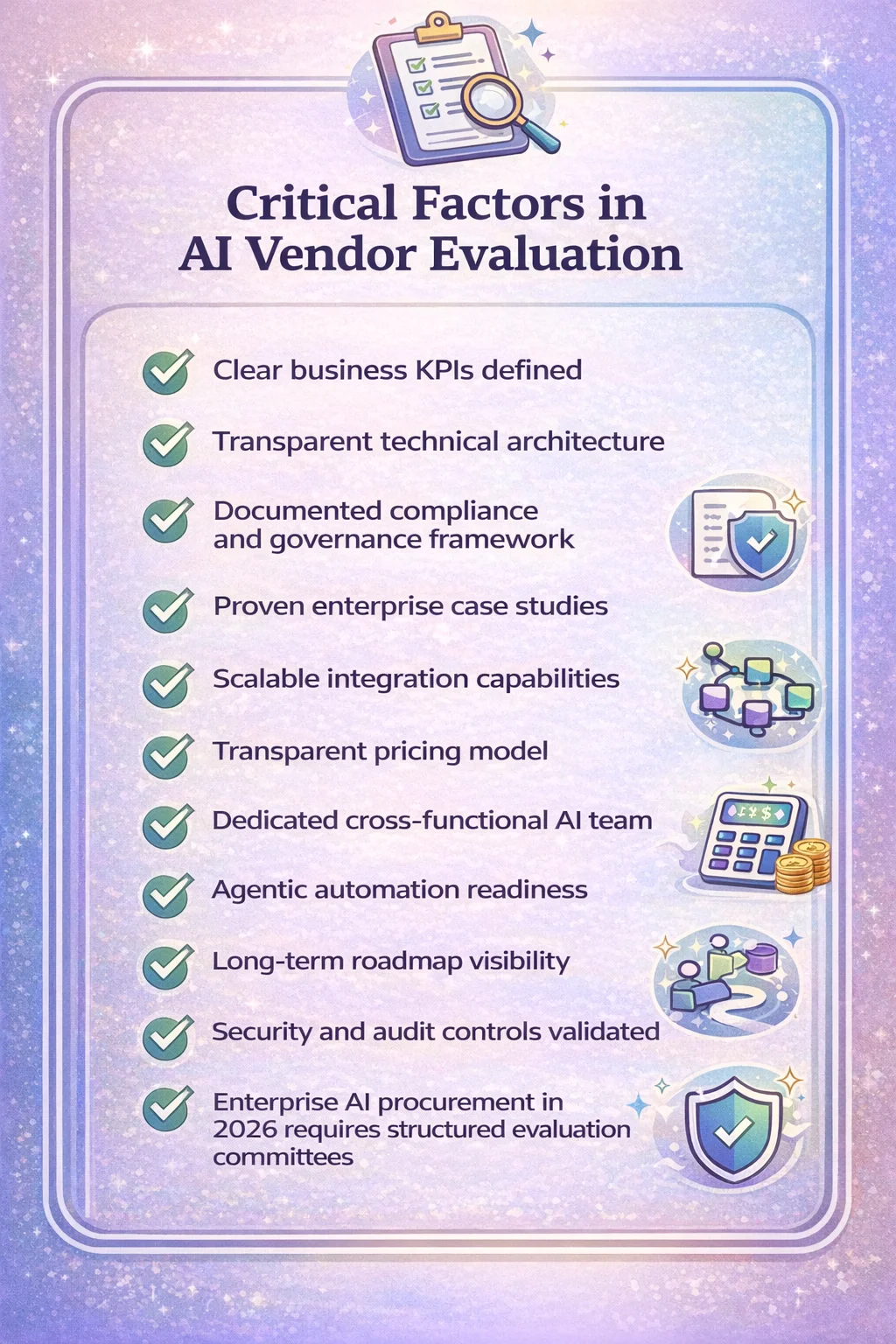

Enterprise AI procurement in 2026 requires structured evaluation committees, not informal demos

Enterprise AI procurement in 2026 demands cross-functional evaluation committees with technical, security, compliance, and business stakeholders rather than relying on persuasive demonstrations alone.
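A committee can keep its assessment structured with a weighted scorecard. The sketch below is only an illustration: the criteria names loosely follow the areas covered in this guide, while the weights, the 1 to 5 scale, and the vendor scores are hypothetical and should be set by the committee itself.

```python
# Hypothetical weights; they must sum to 1.0 and reflect the committee's priorities.
WEIGHTS = {
    "architecture": 0.25,
    "governance": 0.25,
    "integration": 0.20,
    "cost_transparency": 0.15,
    "strategic_fit": 0.15,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine per-criterion scores (1 to 5) into one weighted total."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Illustrative scores agreed by the committee for two fictional vendors.
vendors = {
    "vendor_a": {"architecture": 4, "governance": 5, "integration": 3,
                 "cost_transparency": 2, "strategic_fit": 4},
    "vendor_b": {"architecture": 3, "governance": 3, "integration": 5,
                 "cost_transparency": 4, "strategic_fit": 3},
}
ranking = sorted(vendors, key=lambda name: weighted_score(vendors[name]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(vendors[name]):.2f}")
```

Agreeing on the weights before any demo takes place helps keep the final ranking tied to business priorities rather than presentation quality.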

Enterprise AI Vendor Evaluation Checklist for 2026

The Strategic Shift in 2026 – Enterprise AI Workflow Evolution

Stage 1 – Experimentation (2023)

AI used for isolated use cases, internal testing, and innovation labs with minimal governance.

Stage 2 – Pilot Programs (2024)

Controlled deployments within departments, validating feasibility and short-term ROI.

Stage 3 – Selective Integration (2025)

AI embedded into specific workflows such as support, analytics, or automation with measurable business impact.

Stage 4 – Infrastructure Era (2026)

AI becomes core digital infrastructure, embedded across systems, governed, monitored, and scaled like cloud or ERP platforms.

Evaluation Maturity Must Evolve

If AI is treated like standard SaaS procurement, enterprises risk:

→ Model drift

→ Governance gaps

→ Cost overruns

→ Compliance exposure

If AI is treated as digital infrastructure, enterprises gain:

→ Operational leverage

→ Competitive advantage

→ Sustainable ROI

→ Faster innovation cycles

Strategic takeaway: In 2026, AI is not a tool purchase. It is infrastructure architecture. Vendor evaluation must reflect that shift.


Final Thoughts

AI vendor evaluation in 2026 is no longer about capability demonstration. It is about production reliability, compliance maturity, integration depth, and long-term strategic fit. Enterprises that implement structured AI vendor assessment frameworks will reduce risk and maximize long-term AI value. AI is infrastructure now. Choose accordingly.