The Companies That Fix Execution Will Win the AI Race

A lot of companies have already started building AI.

Some have chatbots.

Some have dashboards.

Some have “AI-powered” workflows.

On the surface, everything looks fine.

But when we looked deeper, a different picture emerged.

We recently audited 10 AI systems across different companies.

Only 2 were actually working in production.

The rest were not broken in obvious ways.

They were failing quietly.

And that is the real problem.

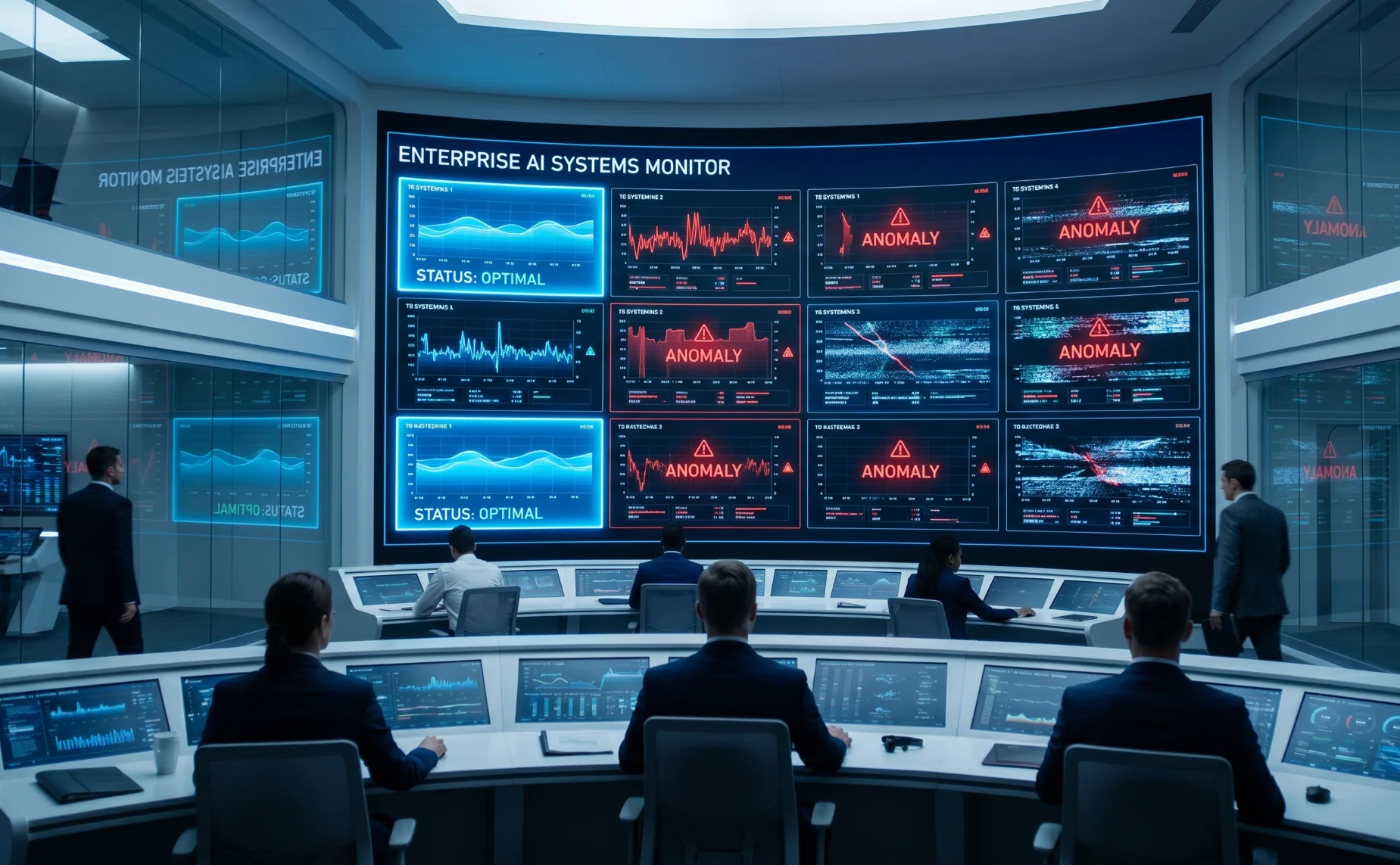

Why AI Failure Is Hard to Notice

Most companies expect failure to look like this:

The model does not work.

But real failure looks like this:

The system runs

But no one fully trusts it

Outputs are used sometimes

But not relied on

Decisions are still made manually

The AI exists

But it does not drive the business

That is failure.

What Went Wrong in 8 Out of 10 Systems

The system was built for presentation

Not for real-world usage

Clean datasets

Perfect inputs

No edge cases

When real data came in

Performance dropped immediately
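One way to narrow the gap between demo data and real data is to check every incoming record before it reaches the model. The sketch below is only an illustration under assumptions: the order record, its `sku` and `quantity` fields, and the `validate_order` helper are hypothetical names, not taken from any of the audited systems.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class CleanOrder:
    # Hypothetical record shape, used only for illustration.
    sku: str
    quantity: int


def validate_order(raw: dict[str, Any]) -> tuple[Optional[CleanOrder], list[str]]:
    """Return (clean record or None, list of problems found)."""
    problems: list[str] = []

    # Normalise the SKU: treat None as empty, trim whitespace, upper-case.
    sku = str(raw.get("sku") or "").strip().upper()
    if not sku:
        problems.append("missing sku")

    # Quantities often arrive as strings; reject anything that is not a whole number.
    quantity = None
    try:
        quantity = int(str(raw.get("quantity", "")).strip())
    except ValueError:
        problems.append("quantity is not a whole number")
    else:
        if quantity <= 0:
            problems.append("quantity must be positive")

    if problems:
        return None, problems
    return CleanOrder(sku=sku, quantity=quantity), []
```

Records that fail validation can be routed to a review queue instead of being scored, so edge cases become visible rather than silently degrading results.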

AI is not just a model

It is a pipeline

What we saw:

Manual uploads

Broken integrations

Delayed data flow

Without data flow

AI becomes irrelevant

Most systems were static

No retraining

No learning

No correction

AI without feedback stops improving

And slowly becomes outdated

The most expensive mistake was the wrong use case

Trying to solve everything

Instead of one clear problem

Using AI where rules would work better

No clear success metric

AI was added because it sounded right

Not because it was needed

Once deployed

The system was left alone

No monitoring

No alerts

No responsibility

Over time

Performance dropped

And no one noticed
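A small amount of monitoring is often enough to catch a silent drop. The sketch below is a minimal example, assuming that the true outcome eventually becomes known for each prediction. The `AccuracyMonitor` class, the window size, and the threshold are illustrative choices, not a description of how any audited system worked.

```python
from collections import deque
from typing import Optional


class AccuracyMonitor:
    """Tracks accuracy over the most recent predictions (hypothetical helper)."""

    def __init__(self, window: int = 100, threshold: float = 0.8):
        # deque with maxlen drops the oldest result automatically.
        self.results: deque = deque(maxlen=window)
        self.threshold = threshold

    def record(self, predicted, actual) -> None:
        # Store True when the prediction matched the real outcome.
        self.results.append(predicted == actual)

    def accuracy(self) -> Optional[float]:
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def should_alert(self) -> bool:
        # Wait until the window is full so a few early misses do not trigger noise.
        if len(self.results) < self.results.maxlen:
            return False
        return self.accuracy() < self.threshold
```

In practice, `should_alert()` would be wired to whatever paging or messaging channel the team already uses, with a named owner on the receiving end.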

We saw systems using:

LLMs

RAG

Vector databases

AI agents

But

No clear architecture

No optimization

No alignment to business need

Complexity increased

Value did not

This showed up strongly in operations-heavy businesses

The system says one thing

Reality shows another

Inventory mismatch

Delays not captured

Exceptions ignored

AI was not connected to ground reality

So it could not solve real problems

No baseline

No comparison

No financial impact

Without ROI tracking

AI becomes a cost

Not an investment
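The baseline, comparison, and financial impact can be tied together in one simple calculation. This is a rough sketch under stated assumptions: the `roi` function and its three inputs (process cost before AI, process cost with AI, and the cost of running the AI system over the same period) are illustrative, and the figures in the usage note are made up.

```python
def roi(baseline_cost: float, cost_with_ai: float, ai_running_cost: float) -> float:
    """Return net gain per unit of money spent on the AI system.

    baseline_cost:    what the process cost before AI (the baseline)
    cost_with_ai:     what the same process costs now (the comparison)
    ai_running_cost:  what the AI system itself costs over the same period
    """
    if ai_running_cost <= 0:
        raise ValueError("ai_running_cost must be positive")
    savings = baseline_cost - cost_with_ai
    return (savings - ai_running_cost) / ai_running_cost
```

For example, with invented numbers, `roi(100_000, 70_000, 20_000)` returns `0.5`: the system saves 30,000, costs 20,000 to run, and so returns 50% on that spend. A negative result means the system is currently a cost.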

What the 2 Successful Systems Did Differently

They were not more advanced

They were more focused

Clear problem

Strong data pipeline

Continuous feedback

Tight integration with operations

Measurable outcomes

They treated AI as a system

Not a feature


What a Real AI System Should Look Like

A working AI system should:

Solve a clear business problem

Connect directly to workflows

Handle messy real-world data

Continuously improve

Be measurable in impact

Because AI does not create value in isolation

It creates value inside operations

What CEOs Should Ask Today

Before investing further in AI

Ask these questions:

Is the system used daily?

Do teams trust it?

Is it connected to real workflows?

Can we measure its impact?

If any answer is unclear

Something is broken

The Biggest Insight From These Audits

The problem is not AI

The problem is execution

Most teams can build demos

Very few can build production systems

That gap is where most AI investments fail

Connect with Nyx Wolves and fix your AI execution

If you already have an AI system and it is not delivering as expected

We can audit it and show you exactly

How Nyx Wolves Helps

At Nyx Wolves, we work with companies to:

Audit existing AI systems

Identify where they are breaking

Fix architecture and pipelines

Rebuild systems for production readiness

Align AI with real business outcomes

Because AI should not stay as an experiment

It should drive real results