Introduction

Artificial intelligence has become a top priority for enterprises worldwide. Boardrooms discuss AI strategy, innovation teams experiment with models, and executives approve ambitious AI roadmaps.

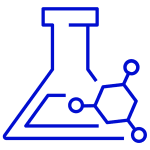

Yet a persistent challenge continues to derail many organizations: too many AI initiatives never make it to production.

Enterprises often celebrate proof-of-concept demonstrations and pilot projects, but when it comes to deploying reliable AI systems into real business workflows, progress slows dramatically.

This gap between experimentation and real deployment is what many leaders now call the Enterprise AI Execution Gap.

In this article, we explore why organizations struggle to operationalize AI and what leaders can do to bridge the gap between experimentation and real business impact.

We will cover:

- Why enterprise AI projects stall before production

- Structural barriers that prevent AI execution

- A practical framework for moving from AI ideas to deployed systems

For CTOs, product leaders, and AI strategists, understanding this execution gap is essential to realizing the true value of AI investments.

Why the AI Execution Gap Matters Now

In the early days of AI adoption, experimentation was enough. Organizations could showcase innovation through pilots and internal prototypes.

Today the expectations are different.

AI is no longer an experimental initiative. It is becoming part of core digital infrastructure, driving automation, decision-making, and customer experience.

When AI projects fail to reach production, the consequences are significant:

- Innovation budgets get wasted on experiments that never scale

- Engineering teams lose confidence in AI initiatives

- Leadership struggles to justify continued AI investment

Industry research on enterprise technology programs frequently reports that more than half of AI pilots never transition into production systems.

This means organizations are investing heavily in AI research while capturing only a fraction of its potential value.

The organizations that succeed with AI are not necessarily those experimenting the most. They are the ones that build reliable execution pipelines for deploying AI systems.

AI Success Is Not About Models Alone

Many enterprises assume that AI success depends primarily on building better models.

In reality, production AI systems depend on an entire ecosystem of capabilities beyond model development.

Enterprise AI systems require the following capabilities.

Data Infrastructure

AI systems depend on reliable data pipelines that continuously ingest, clean, and structure data from multiple sources.

Without well-maintained data infrastructure, even the most advanced models cannot function reliably.
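To make the ingest, clean, and structure stages concrete, here is a minimal stdlib-only Python sketch. The record fields (`customer_id`, `email`, `lifetime_spend`) and the rule that later sources override earlier ones are illustrative assumptions, not a prescribed schema; production pipelines would usually run these stages on a dedicated data platform.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class CustomerRecord:
    """Hypothetical structured record produced by the pipeline."""
    customer_id: str
    email: str
    lifetime_spend: float


def ingest(*sources: Iterable[dict]) -> list[dict]:
    """Ingest: concatenate raw rows from several sources (e.g. CRM and billing exports)."""
    rows: list[dict] = []
    for source in sources:
        rows.extend(source)
    return rows


def clean_and_structure(rows: list[dict]) -> list[CustomerRecord]:
    """Clean: drop incomplete or malformed rows and normalize values.
    Structure: emit one typed record per customer_id (later rows win)."""
    by_id: dict[str, CustomerRecord] = {}
    for row in rows:
        customer_id = str(row.get("customer_id") or "").strip()
        email = str(row.get("email") or "").strip().lower()
        try:
            spend = float(row["lifetime_spend"])
        except (KeyError, TypeError, ValueError):
            continue  # missing or non-numeric spend: skip rather than guess
        if not customer_id or "@" not in email:
            continue  # incomplete row
        by_id[customer_id] = CustomerRecord(customer_id, email, spend)
    return list(by_id.values())


crm = [{"customer_id": "c1", "email": " A@Example.com ", "lifetime_spend": "120.5"}]
billing = [
    {"customer_id": "c2", "email": "not-an-email", "lifetime_spend": 10},
    {"customer_id": "c1", "email": "a@example.com", "lifetime_spend": 130},
]
records = clean_and_structure(ingest(crm, billing))
# records == [CustomerRecord("c1", "a@example.com", 130.0)]
```

Keeping each stage a separate function makes it easy to test them independently and to swap the in-memory lists for real connectors later.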

Model Deployment Pipelines

Moving from experimentation to production requires deployment infrastructure, including:

- model versioning

- automated retraining pipelines

- containerized inference services

Without these components, AI models remain trapped in research environments.
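Model versioning is the piece that makes deployment reversible. The sketch below is a hypothetical in-memory registry, assuming that every registered version is immutable and that "production" is simply a pointer to one version; real teams would typically use a managed registry backed by durable storage rather than this class.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: int
    artifact: Any                 # e.g. a path to a serialized model
    metrics: dict[str, float]     # offline evaluation results at registration time


class ModelRegistry:
    """Hypothetical in-memory registry; a real one would persist to durable storage."""

    def __init__(self) -> None:
        self._versions: dict[str, list[ModelVersion]] = {}
        self._production: dict[str, int] = {}

    def register(self, name: str, artifact: Any, metrics: dict[str, float]) -> int:
        """Store an immutable new version and return its number (starting at 1)."""
        versions = self._versions.setdefault(name, [])
        entry = ModelVersion(name, len(versions) + 1, artifact, dict(metrics))
        versions.append(entry)
        return entry.version

    def promote(self, name: str, version: int) -> None:
        """Point production at an existing version; promoting an older one is a rollback."""
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise ValueError(f"unknown version {version} for model {name!r}")
        self._production[name] = version

    def production(self, name: str) -> ModelVersion:
        """Return the version currently serving traffic (KeyError if none promoted)."""
        return self._versions[name][self._production[name] - 1]
```

Usage: `registry.register("churn", "churn-v2.pkl", {"auc": 0.84})` records a new version, and `registry.promote("churn", 1)` rolls production back without retraining anything.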

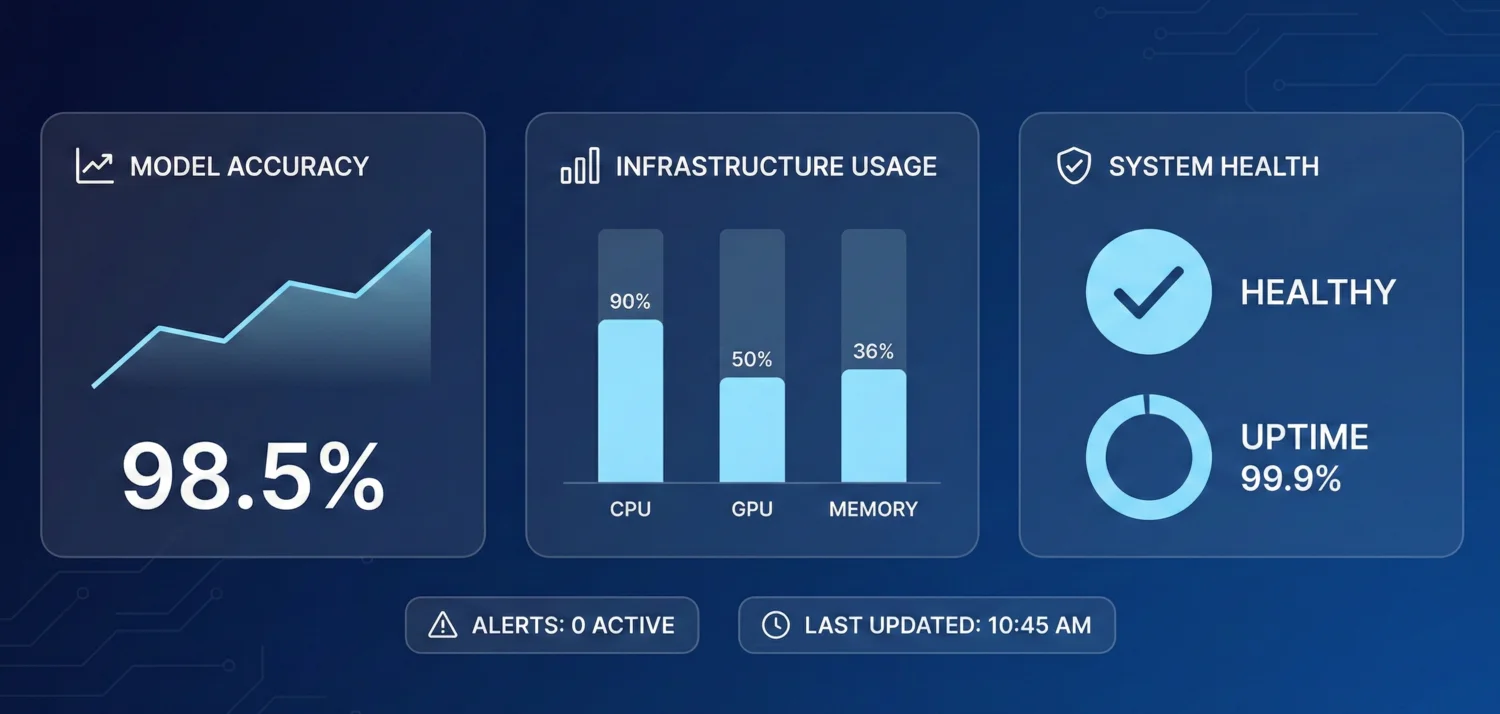

Monitoring and Observability

Production AI systems must be continuously monitored to detect:

- model drift

- data distribution changes

- performance degradation

This monitoring layer is essential to maintain trust in AI systems over time.
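One common way to quantify a data distribution change is the Population Stability Index (PSI), which compares how a feature's values fall into bins in a reference window versus live traffic. The stdlib-only sketch below illustrates the calculation; the bin edges and the 0.1 / 0.25 thresholds are widely used rules of thumb rather than universal standards. Note that PSI only watches inputs: detecting performance degradation also requires comparing predictions with real outcomes once labels arrive.

```python
import math
from bisect import bisect_right


def bin_proportions(values: list[float], edges: list[float], eps: float = 1e-6) -> list[float]:
    """Share of values in each bin; `edges` are sorted interior boundaries (len(edges) + 1 bins)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    # Floor at eps so empty bins do not produce log(0) or division by zero.
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(reference: list[float], live: list[float], edges: list[float]) -> float:
    """PSI = sum over bins of (live% - ref%) * ln(live% / ref%)."""
    ref = bin_proportions(reference, edges)
    cur = bin_proportions(live, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def drift_status(psi: float) -> str:
    """Rule-of-thumb bands; in practice thresholds are tuned per feature."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate drift"
    return "significant drift"
```

A scheduled job could compute this per feature each day and raise an alert whenever `drift_status` returns anything other than `"stable"`.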

Integration with Business Systems

AI delivers value only when it is embedded inside operational systems.

This may include:

- CRM systems

- ERP platforms

- logistics platforms

- customer service applications

If AI outputs remain isolated from operational tools, the system cannot influence real decisions.
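As an illustration of closing that loop, the sketch below turns churn scores into follow-up tasks inside a CRM. `CrmClient` and its `create_task` method are hypothetical stand-ins for whatever API your CRM actually exposes, and the 0.7 threshold is an assumed value; the in-memory class exists only so the logic can be exercised without a real system.

```python
from typing import Protocol


class CrmClient(Protocol):
    """Hypothetical interface standing in for a real CRM API."""

    def create_task(self, customer_id: str, description: str) -> None: ...


def push_churn_alerts(scores: dict[str, float], crm: CrmClient, threshold: float = 0.7) -> int:
    """Create a CRM follow-up task for every customer at or above the risk threshold."""
    created = 0
    for customer_id, score in sorted(scores.items()):
        if score >= threshold:
            crm.create_task(customer_id, f"Churn risk {score:.0%}: schedule a retention call")
            created += 1
    return created


class InMemoryCrm:
    """Test double that records tasks instead of calling a real CRM."""

    def __init__(self) -> None:
        self.tasks: list[tuple[str, str]] = []

    def create_task(self, customer_id: str, description: str) -> None:
        self.tasks.append((customer_id, description))
```

Depending on a small interface rather than a vendor SDK keeps the model code independent of any one CRM and makes the integration easy to test.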

Cross-Functional Alignment

Successful AI deployment requires collaboration between multiple teams:

- engineering

- data science

- product

- operations

- finance

When these teams operate in isolation, AI initiatives struggle to move beyond experimentation.

Why Enterprises Struggle to Execute AI Projects

The AI execution gap is not caused by a single technical challenge. It emerges from a combination of organizational and operational barriers.

Unclear Ownership

AI projects often begin inside innovation teams or data science groups.

However, production systems require coordination across multiple departments.

When ownership is unclear, projects stall during deployment.

Infrastructure Built for Traditional Software

Many enterprise IT environments were designed for traditional software systems rather than AI workloads.

AI systems require infrastructure that supports:

- large-scale data processing

- GPU-based training

- real-time inference pipelines

Without these capabilities, deployment becomes slow and expensive.

AI Engineering Talent Shortage

Data scientists can build models, but deploying production systems requires AI engineering expertise.

These engineers specialize in:

- model deployment

- MLOps pipelines

- scalable inference infrastructure

The shortage of experienced AI engineers is widely cited as one of the biggest bottlenecks in enterprise AI adoption.

Slow Procurement and Governance

Enterprise procurement processes often move slowly when adopting new AI tools.

Teams experimenting with AI often rely on small sandbox environments that cannot scale.

When projects attempt to transition into production infrastructure, governance delays slow progress significantly.

Unclear ROI

Executives often struggle to measure the return on AI initiatives.

When ROI is unclear, projects remain stuck in pilot phases instead of receiving full investment for production deployment.
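Part of the problem is simply that ROI is never written down as a calculation. The sketch below shows two basic measures, simple ROI and payback period; the function names and the dollar figures in the usage note are hypothetical, and a real business case would also account for risk, time value of money, and shared infrastructure costs.

```python
import math


def simple_roi(annual_benefit: float, annual_cost: float) -> float:
    """Return on investment as a fraction: (benefit - cost) / cost."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return (annual_benefit - annual_cost) / annual_cost


def payback_months(upfront_cost: float, monthly_net_benefit: float) -> float:
    """Months until cumulative net benefit covers the upfront cost."""
    if monthly_net_benefit <= 0:
        return math.inf  # never pays back at current performance
    return upfront_cost / monthly_net_benefit


# Hypothetical pilot: saves $300,000 a year and costs $200,000 a year to run.
print(simple_roi(300_000, 200_000))      # 0.5, i.e. a 50% return
print(payback_months(120_000, 20_000))   # 6.0 months
```

Agreeing on these inputs before a pilot starts gives executives a concrete basis for the production funding decision.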

The Real Consequences of the AI Execution Gap

When AI projects fail to reach production, organizations face multiple long-term consequences.

| Impact Area | What Happens | Business Consequences |
|---|---|---|
| Innovation Stalls | AI projects remain stuck in pilot stage | Delayed product innovation |
| Talent Frustration | Engineers work on experiments that never deploy | Reduced team motivation |
| Strategic Risk | Competitors deploy AI faster | Market advantage lost |
| Budget Waste | AI investments produce limited impact | Reduced executive confidence |

Over time, these challenges lead organizations to become cautious about AI investments, slowing innovation even further.

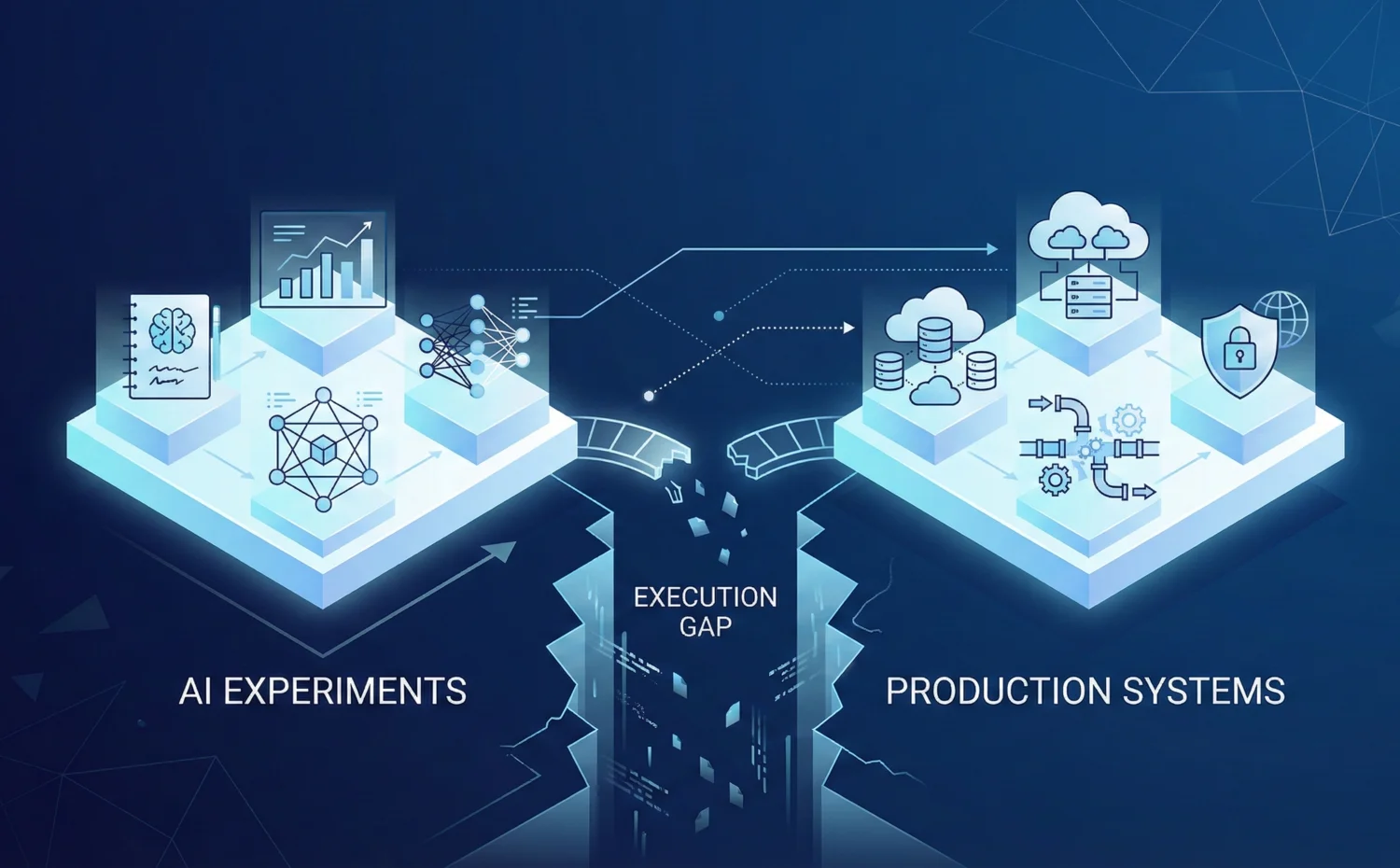

Five Steps to Bridge the AI Execution Gap

Organizations that successfully operationalize AI typically follow a structured approach to execution.

Below is a practical framework for moving from AI experimentation to production systems.

Step 1: Define Business-Driven AI Use Cases

Objective: Ensure AI initiatives target real operational problems.

Actions:

- Identify high-impact business processes

- Define measurable outcomes for AI systems

- Align AI initiatives with strategic goals

Outputs:

- clearly defined AI use cases

- success metrics for deployment
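A success metric is easiest to enforce when it is recorded as data rather than prose. This sketch assumes each use case has one primary metric with a baseline, a target, and a direction; the `SuccessMetric` class and the handling-time example are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessMetric:
    name: str
    baseline: float               # value measured before the AI system
    target: float                 # value agreed as "success" for deployment
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        """True when the observed value reaches the target in the right direction."""
        return observed >= self.target if self.higher_is_better else observed <= self.target

    def improvement(self, observed: float) -> float:
        """Relative change versus baseline, signed so that positive always means better."""
        change = (observed - self.baseline) / self.baseline
        return change if self.higher_is_better else -change


# Hypothetical use case: cut average handling time from 10 to 8 minutes.
handle_time = SuccessMetric("avg_handle_minutes", baseline=10.0, target=8.0, higher_is_better=False)
```

Here `handle_time.is_met(7.5)` is `True`, and `handle_time.improvement(8.0)` reports a 20% improvement.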

Step 2: Build AI-Ready Infrastructure

Objective: Create infrastructure capable of supporting AI workloads.

Actions:

- implement scalable data pipelines

- deploy GPU-enabled compute environments

- establish model deployment pipelines

Outputs:

- reliable AI infrastructure

- scalable model training and inference systems

Step 3: Implement MLOps Automation

Objective: Operationalize AI models through automation.

Actions:

- automate model training workflows

- implement model version control

- deploy monitoring and alerting systems

Outputs:

- repeatable deployment pipelines

- stable production AI systems
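Automated retraining is only safe if a new model cannot silently replace a better one. A minimal sketch of a promotion gate follows, assuming `train` and `evaluate` are callables your pipeline already provides and that a higher score is better; `min_gain` is a hypothetical margin that guards against promoting on noise. In practice an orchestrator would schedule this and a registry would record the promoted version.

```python
import logging
from typing import Callable, Optional, Tuple

logger = logging.getLogger("retraining")


def retrain_with_gate(
    train: Callable[[], object],
    evaluate: Callable[[object], float],
    production_score: float,
    min_gain: float = 0.005,
) -> Tuple[Optional[object], float]:
    """Train a candidate, score it on held-out data, and promote only if it clearly wins.

    Returns (candidate, score) when promotion is warranted, else (None, score).
    """
    candidate = train()
    score = evaluate(candidate)
    if score >= production_score + min_gain:
        logger.info("promoting candidate: %.4f vs production %.4f", score, production_score)
        return candidate, score
    # Keeping the current model is the safe default; log it so the team can investigate.
    logger.warning("candidate rejected: %.4f vs production %.4f", score, production_score)
    return None, score
```

Routing the warning to the same alerting channel as drift alerts means a run of rejected candidates gets noticed rather than quietly ignored.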

Step 4: Integrate AI into Business Workflows

Objective: Ensure AI outputs influence real workflows.

Actions:

- integrate models with enterprise applications

- embed AI insights into operational dashboards

- automate decision workflows

Outputs:

- AI-driven processes

- measurable operational improvements
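Automating a decision workflow rarely means letting the model decide everything. A common pattern, sketched here under assumed thresholds, routes each prediction by confidence: clear cases are handled automatically and uncertain ones go to a person. The `Route` names and the 0.9 / 0.2 cutoffs are hypothetical and would be set from the process's success metrics and risk tolerance.

```python
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    AUTO_REJECT = "auto_reject"


def route_decision(score: float, approve_at: float = 0.9, reject_below: float = 0.2) -> Route:
    """Map a model confidence score in [0, 1] to a workflow action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be in [0, 1], got {score}")
    if reject_below > approve_at:
        raise ValueError("reject_below must not exceed approve_at")
    if score >= approve_at:
        return Route.AUTO_APPROVE
    if score < reject_below:
        return Route.AUTO_REJECT
    return Route.HUMAN_REVIEW  # uncertain band: keep a person in the loop
```

Narrowing the human-review band over time, as monitoring builds confidence, is one measurable way to show operational improvement.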

Step 5: Establish Governance and Scale

Objective: Sustain AI execution across the organization.

Actions:

- implement AI governance frameworks

- monitor performance and ROI

- scale successful AI initiatives across departments

Outputs:

- enterprise AI maturity

- predictable innovation pipelines
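Governance reviews are more consistent when the scale-or-stop rule is explicit. The sketch below is one hypothetical rule combining ROI with whether the agreed success metric was met; the `InitiativeReview` fields, the three outcomes, and the 0.3 ROI bar are assumptions to adapt, not a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InitiativeReview:
    name: str
    roi: float          # e.g. (benefit - cost) / cost over the review period
    target_met: bool    # did the agreed success metric reach its target?


def portfolio_decision(review: InitiativeReview, scale_roi: float = 0.3) -> str:
    """Hypothetical rule: scale clear winners, iterate on partial wins, retire the rest."""
    if review.target_met and review.roi >= scale_roi:
        return "scale"
    if review.target_met or review.roi > 0:
        return "iterate"
    return "retire"
```

Applied to a whole portfolio each quarter, the same rule lets successful initiatives expand to other departments while stalled pilots are closed deliberately rather than left running.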

How AI Leaders Should Communicate Execution Strategy

AI leaders must communicate that AI success depends on execution systems rather than isolated experiments.

Executives should frame AI investments around:

- production impact rather than research milestones

- measurable operational improvements

- scalable infrastructure rather than isolated pilots

When leadership focuses on execution rather than experimentation, AI initiatives gain momentum across the organization.

Tools That Support AI Execution

Several platforms help enterprises operationalize AI systems at scale.

Examples include:

- An open source platform for managing machine learning pipelines on Kubernetes infrastructure.

- A widely used framework for managing the model lifecycle, including experimentation, deployment, and monitoring.

- A platform that provides experiment tracking and model performance monitoring for large-scale AI development teams.

- A workflow orchestration system commonly used to manage complex data pipelines and model retraining schedules.

These tools help organizations move from fragmented experiments to structured AI delivery pipelines.

Future Outlook

The next wave of enterprise AI adoption will not be defined by who experiments the most with AI.

It will be defined by who can operationalize AI at scale.

Organizations that solve the execution gap will build repeatable pipelines for deploying AI systems across products, operations, and customer experiences.

Those that fail to bridge this gap will continue to produce impressive prototypes without realizing their full business value.

Conclusion

The enterprise AI execution gap remains one of the biggest barriers to realizing the value of artificial intelligence.

Many organizations invest heavily in AI experimentation but struggle to translate prototypes into production systems.

Bridging this gap requires more than better models. It requires infrastructure, engineering discipline, and organizational alignment.

When enterprises focus on building strong execution frameworks, AI transitions from isolated innovation projects into a powerful engine for business transformation.